From Black Box to Clear Boundaries

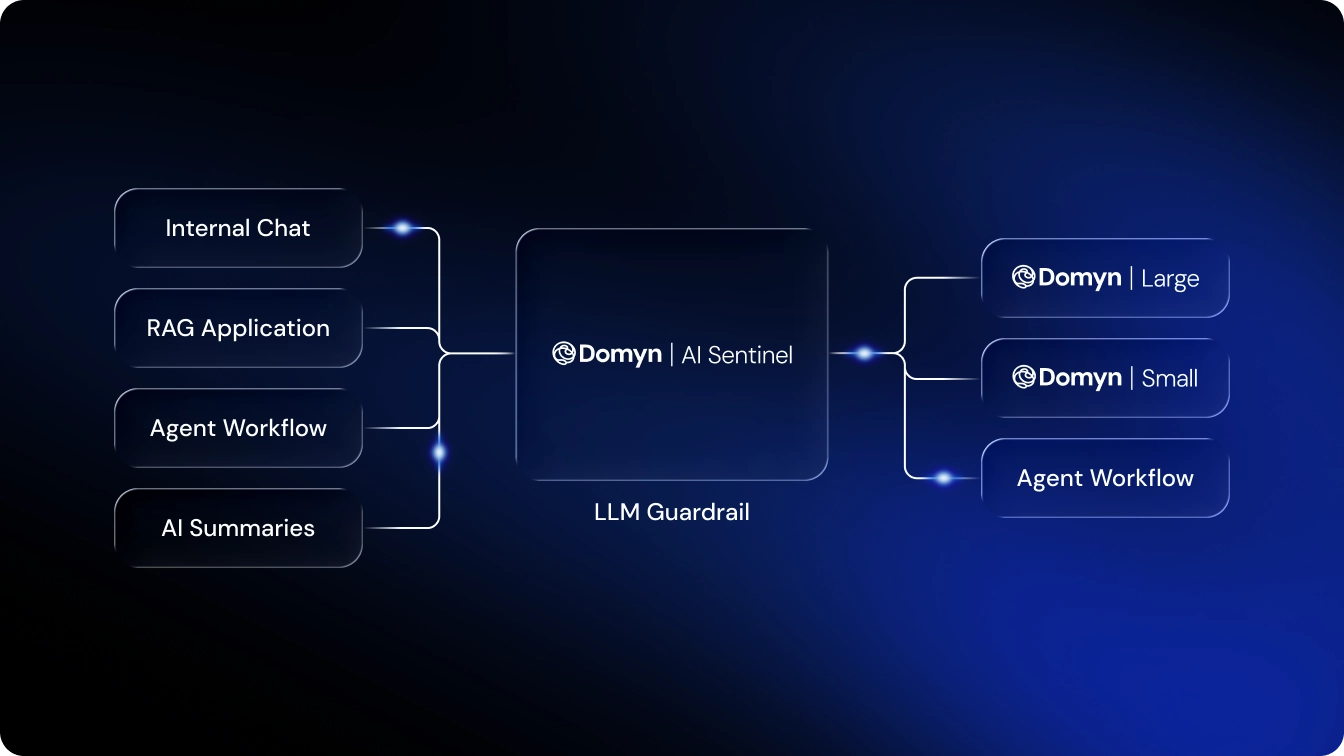

LLM guardrails are control mechanisms that help keep LLM behavior safe, aligned, and reliable. They define and enforce boundaries on what a model can generate: blocking harmful outputs, protecting sensitive data, and keeping responses within ethical, regulatory, and business standards.
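To make "enforcing a boundary" concrete, here is a minimal sketch of an output-side guardrail in Python. It assumes a simple post-processing step that sees the model's text before the user does. The names `apply_output_guardrail`, `GuardrailResult`, `BLOCKED_PHRASES`, and the email pattern are hypothetical and chosen only for illustration. Production guardrails typically combine several such checks with classifiers and policy engines.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Hypothetical policy values for illustration only; not a vetted safety list.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
BLOCKED_PHRASES = ("internal use only",)
REFUSAL_TEXT = "Sorry, I can't share that."


@dataclass
class GuardrailResult:
    allowed: bool                 # False when the output was blocked outright
    text: str                     # Text that is safe to return to the user
    reasons: List[str] = field(default_factory=list)  # Why the guardrail acted


def apply_output_guardrail(model_output: str) -> GuardrailResult:
    """Check model output against a toy policy before it reaches the user."""
    reasons: List[str] = []

    # Boundary 1: block outputs containing disallowed phrases (case-insensitive).
    lowered = model_output.lower()
    for phrase in BLOCKED_PHRASES:
        if phrase in lowered:
            reasons.append(f"blocked phrase: {phrase}")
    if reasons:
        return GuardrailResult(allowed=False, text=REFUSAL_TEXT, reasons=reasons)

    # Boundary 2: redact sensitive data (here, only email addresses).
    redacted, count = EMAIL_RE.subn("[REDACTED_EMAIL]", model_output)
    if count:
        reasons.append(f"redacted {count} email address(es)")

    return GuardrailResult(allowed=True, text=redacted, reasons=reasons)


if __name__ == "__main__":
    result = apply_output_guardrail("Contact alice@example.com for help.")
    print(result.allowed, result.text, result.reasons)
```

The sketch separates two kinds of enforcement: blocking, where the response is replaced entirely, and redaction, where the response passes through with sensitive spans masked. Returning the reasons alongside the text keeps the decision auditable, which matters when guardrails must also satisfy regulatory or business requirements.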