
AI Sentinel deploys guardrail models that detect, intercept, and neutralize threats. The models are built on deep expertise in the regulations, context, and risks of each sector.

Traditional moderation tools were designed for static content and narrow classification tasks. They struggle to interpret context, adapt to evolving language, and provide the transparency required for production AI systems. AI Sentinel introduces an independent governance and evaluation layer for generative models.

It assesses outputs against safety policies, regulatory rules, and operational constraints, returning structured decisions with supporting signals. Because it operates outside the generation process, Sentinel can enforce consistent governance across any model.

Real-world applications

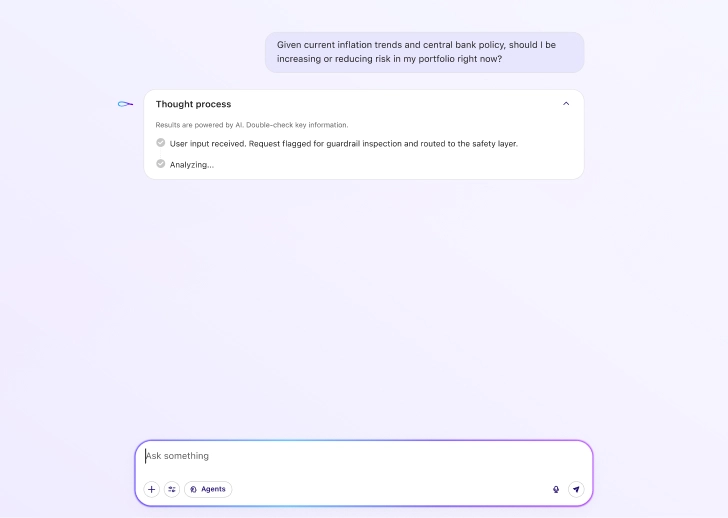

AI Sentinel analyzes AI interactions as they occur in real workflows. Inputs and outputs pass through a structured evaluation pipeline that detects risks and determines governance actions.

AI pricing and valuation models can drift from market reality and misguide decisions without anyone noticing. AI Sentinel monitors model performance, flags drift and unreliable outputs, and halts automated actions in real time.

Poorly validated AI tools can miss high-risk patients and deliver unsafe recommendations. AI Sentinel monitors model outputs, flags unreliable predictions, and enforces clinician review in real time.

Automated control systems can act on faulty sensor data and overpower operator inputs before anyone reacts. AI Sentinel verifies input signals, blocks unsafe automated actions, and triggers human override in real time.

When AI decides who gets benefits, credit, or scrutiny, unchecked bias can penalize populations with no way to appeal. AI Sentinel audits fairness, flags discriminatory patterns, and halts high-impact decisions in real time.

User or system content enters the guardrail model before being processed or delivered.

An independent LLM assesses the content using a defined set of safety and policy criteria.

The evaluation returns a structured verdict with a confidence score and clear explanation.

Configured thresholds determine whether content is allowed, filtered, blocked, or flagged.
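The four steps above can be sketched in a few lines of Python. This is a minimal illustration only, not AI Sentinel's actual schema or API: the `Verdict` fields, the `Thresholds` values, and the `decide` function are all hypothetical names chosen for the example, and the cut-offs are arbitrary.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    FLAG = "flag"
    FILTER = "filter"
    BLOCK = "block"


@dataclass(frozen=True)
class Verdict:
    """Hypothetical structured verdict returned by the evaluator."""
    category: str       # e.g. "pii", "toxicity", or "none"
    confidence: float   # confidence that the content violates policy, in [0, 1]
    explanation: str    # human-readable reasoning behind the verdict


@dataclass(frozen=True)
class Thresholds:
    """Illustrative cut-offs; real values would be configured per deployment."""
    flag: float = 0.30
    filter: float = 0.60
    block: float = 0.85


def decide(verdict: Verdict, t: Thresholds = Thresholds()) -> Action:
    """Map a verdict's confidence score to a governance action."""
    if not 0.0 <= verdict.confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    # Check the strictest threshold first so the strongest action wins.
    if verdict.confidence >= t.block:
        return Action.BLOCK
    if verdict.confidence >= t.filter:
        return Action.FILTER
    if verdict.confidence >= t.flag:
        return Action.FLAG
    return Action.ALLOW
```

Keeping the thresholds in their own object, separate from the evaluator, means the same verdict can lead to different actions in different deployments without touching the evaluation step.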

Every interaction is treated as a decision point.

Before content is processed or delivered, it is evaluated against a defined safety framework, scored, classified, and paired with clear reasoning. This ensures every outcome is consistent, explainable, and fully controlled.

Deploy AI Sentinel across your AI systems through flexible APIs supporting real-time and asynchronous workflows.
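As a rough sketch of how the two workflow styles differ on the calling side, the Python below shows an inline check for a single message and a concurrent check for a batch. Nothing here reflects the real AI Sentinel API: `placeholder_evaluator` is a stand-in for a call to a guardrail service, and `guard_realtime` and `guard_batch` are hypothetical helper names.

```python
import asyncio
from typing import Awaitable, Callable

# Hypothetical evaluator signature: takes text, returns True if it may pass.
Evaluator = Callable[[str], Awaitable[bool]]


async def placeholder_evaluator(text: str) -> bool:
    """Stand-in for a guardrail service call; not a real policy check."""
    await asyncio.sleep(0)  # simulates awaiting a network round trip
    return "forbidden" not in text.lower()


async def guard_realtime(text: str, evaluate: Evaluator,
                         fallback: str = "[blocked]") -> str:
    """Real-time path: evaluate inline, return the text or a fallback."""
    return text if await evaluate(text) else fallback


async def guard_batch(texts: list[str], evaluate: Evaluator) -> list[bool]:
    """Asynchronous path: evaluate many items concurrently, in input order."""
    return list(await asyncio.gather(*(evaluate(t) for t in texts)))
```

The real-time helper sits directly in the request path, so its latency is added to every response. The batch helper suits offline review or audit jobs where throughput matters more than per-item latency.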

Apply domain-specific governance to any model, proprietary or open-source, without retraining it.
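One way to picture governance that needs no retraining is a wrapper that sits around the model call and leaves the model itself untouched. The sketch below assumes the model is any function from prompt to response and the guardrail is any function that returns `True` for allowed text. `with_guardrail`, `Generate`, and `Check` are hypothetical names for illustration, not part of AI Sentinel.

```python
from typing import Callable

Generate = Callable[[str], str]  # any model, proprietary or open-source
Check = Callable[[str], bool]    # hypothetical guardrail check: True means allowed


def with_guardrail(generate: Generate, check: Check,
                   refusal: str = "[withheld by policy]") -> Generate:
    """Wrap `generate` so both the prompt and the response are checked."""
    def guarded(prompt: str) -> str:
        if not check(prompt):
            return refusal  # input rejected before the model is called
        response = generate(prompt)
        return response if check(response) else refusal
    return guarded


# Usage with toy stand-ins for a model and a policy check.
def toy_model(prompt: str) -> str:
    return prompt.upper()


def no_secrets(text: str) -> bool:
    return "secret" not in text.lower()


guarded_model = with_guardrail(toy_model, no_secrets)
```

Because the wrapper depends only on the two function signatures, swapping the underlying model does not change the governance logic.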